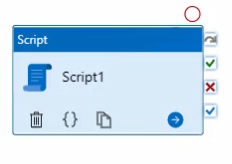

I’ve stumbled upon a brand new feature in Azure Data Factory. …

I stumbled upon an error a few weeks ago when working with a …

Do you want to learn Databricks and do you want to read only one book …

Yes, you read that correctly. You can travel through time in your data using …