Hello and welcome back to the SQL Server Performance Tuning series. Over the last 2 weeks we have talked about pessimistic and optimistic locking in SQL Server. Today I want to talk about one phenomenon in SQL Server that is specific to locking: so-called Lock Escalations. Before we go into the details of that problem, I want to talk a little bit more about the Lock Hierarchy that SQL Server uses internally.

Lock Hierarchy

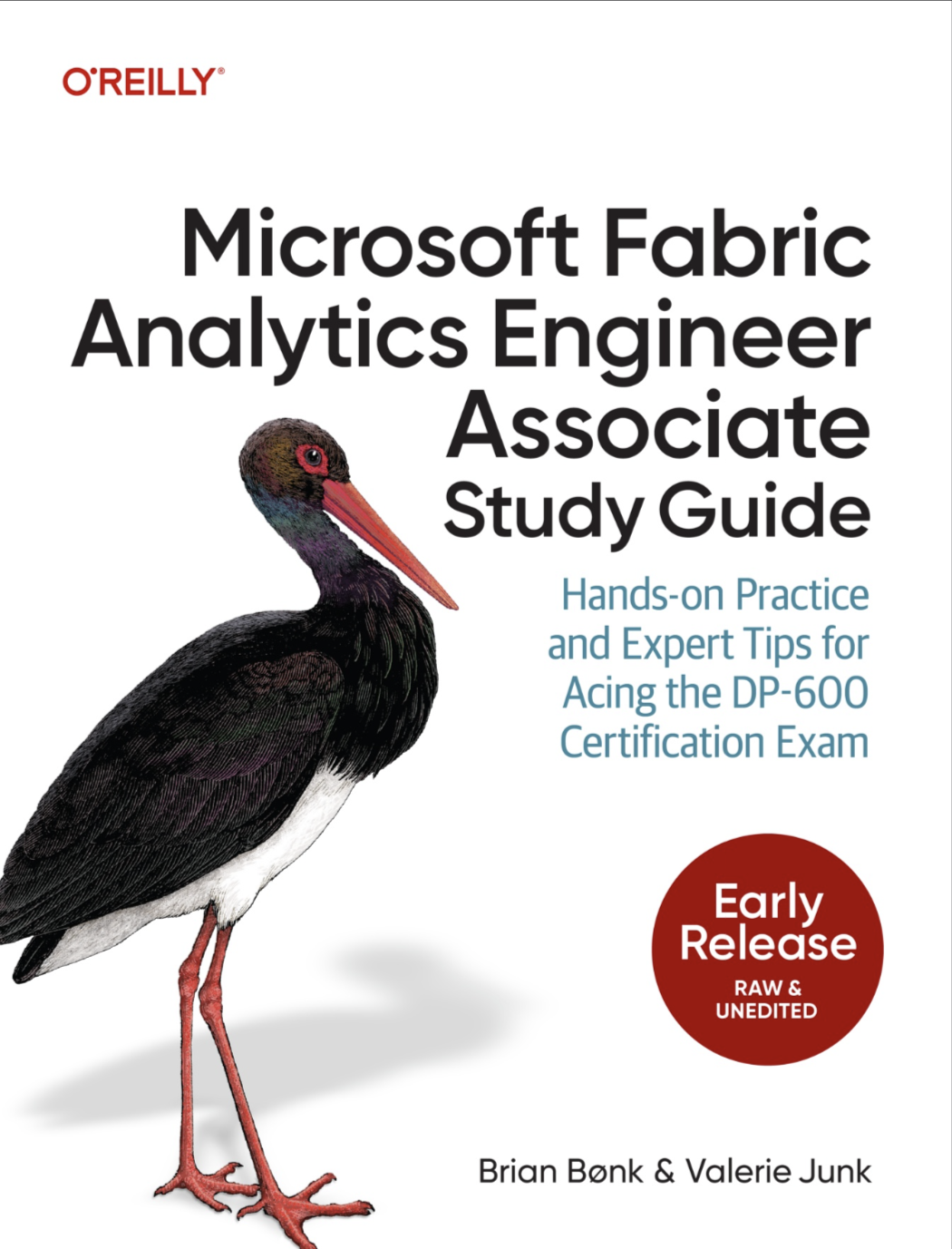

When we started talking about pessimistic concurrency 2 weeks ago, I told you that SQL Server acquires shared and exclusive locks at the record level. Unfortunately that’s not the complete truth. The whole truth is that SQL Server acquires locks at different granularities: the database, the table, the pages, and finally the individual records. SQL Server implements a complete lock hierarchy, which is shown in the picture above.

As soon as you use a database, your session acquires a shared lock on that database. That shared lock is needed so that no one else can drop the database or restore a backup over it: these operations simply block as long as your session is open. In addition to the shared and exclusive locks at the row level, SQL Server uses so-called Intent Locks at the table and page level.

- With shared locks at the row level, you get an IS (intent shared lock) on the table and page

- With an exclusive lock at the row level, you get an IX (intent exclusive lock) on the table and page

The intent locks are used to signal that a (possibly) incompatible lock has already been granted at a lower level in the lock hierarchy. Intent locks are mainly a performance optimization of the lock manager. Without them, the lock manager would have to go through the complete list of locks at the lower levels to determine whether a lock at a higher level can be granted. For example, if one session holds an IX lock at the table level, another session can’t acquire an X lock on that table, because some records in the table are already exclusively locked: the request for the table-level X lock blocks because of the IX lock.
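The lock hierarchy described above can be observed through the `sys.dm_tran_locks` dynamic management view. The following is a minimal sketch, not a definitive test: the table `dbo.LockDemo` and its columns are hypothetical names chosen for illustration, and querying the view requires the `VIEW SERVER STATE` permission.

```sql
-- Hypothetical demo table; any table with a clustered primary key behaves similarly.
CREATE TABLE dbo.LockDemo
(
    Id      INT       NOT NULL PRIMARY KEY,
    Payload CHAR(100) NOT NULL
);

INSERT INTO dbo.LockDemo (Id, Payload)
VALUES (1, 'a'), (2, 'b'), (3, 'c');

BEGIN TRANSACTION;

-- Modify a single row; the X lock on it is held until the transaction ends.
UPDATE dbo.LockDemo
SET Payload = 'changed'
WHERE Id = 1;

-- List the locks held by the current session at every level of the hierarchy.
SELECT resource_type, request_mode, request_status
FROM sys.dm_tran_locks
WHERE request_session_id = @@SPID
ORDER BY resource_type;

ROLLBACK TRANSACTION;

DROP TABLE dbo.LockDemo;
```

On a default configuration you would typically expect to see a shared lock on the `DATABASE` resource, IX locks on the `OBJECT` (table) and `PAGE` resources, and an X lock on the `KEY` resource (the row in the clustered index). The exact rows can differ by version and settings.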

Unfortunately this multigranularity locking doesn’t come for free: every lock needs 96 bytes in SQL Server, and therefore consumes some memory. SQL Server has to make sure that no query uses too much lock space memory, because otherwise memory can be exhausted. That’s why the concept of lock escalations exists.

Lock Escalations

Imagine the following scenario: you update 1 million records that are spread across 200.000 data pages. In that case you need 1.000.000 X locks on the records themselves, 200.000 IX locks on the various pages, and one IX lock on the table itself. In total your query acquires 1.200.001 locks, which need around 110 MB of lock space in the lock manager - just for one single query. Regarding the memory footprint this approach is very dangerous. For that reason SQL Server triggers a lock escalation as soon as you have acquired more than 5.000 locks at one level - such as the record level. In that case SQL Server escalates your individual fine-grained row-level locks to one coarse-grained table lock:

- Individual X locks are escalated to 1 table X lock

- Individual S locks are escalated to 1 table S lock
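Going back to the memory estimate in the scenario above, the numbers can be reproduced with some simple arithmetic. This is only a back-of-the-envelope sketch: the variable names are made up for illustration, and the 96 bytes per lock are taken from the text above.

```sql
-- Lock counts from the scenario: 1 million rows on 200,000 pages in one table.
DECLARE @RowLocks     BIGINT = 1000000;
DECLARE @PageLocks    BIGINT = 200000;
DECLARE @TableLocks   BIGINT = 1;
DECLARE @BytesPerLock BIGINT = 96;

DECLARE @TotalLocks BIGINT = @RowLocks + @PageLocks + @TableLocks;

SELECT
    @TotalLocks                 AS TotalLocks,  -- 1200001
    @TotalLocks * @BytesPerLock AS TotalBytes,  -- 115200096
    CAST(@TotalLocks * @BytesPerLock / 1048576.0 AS DECIMAL(10, 2)) AS TotalMB;  -- 109.86
```

After an escalation the same statement holds a single table lock, so the lock space it needs shrinks to a negligible amount.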

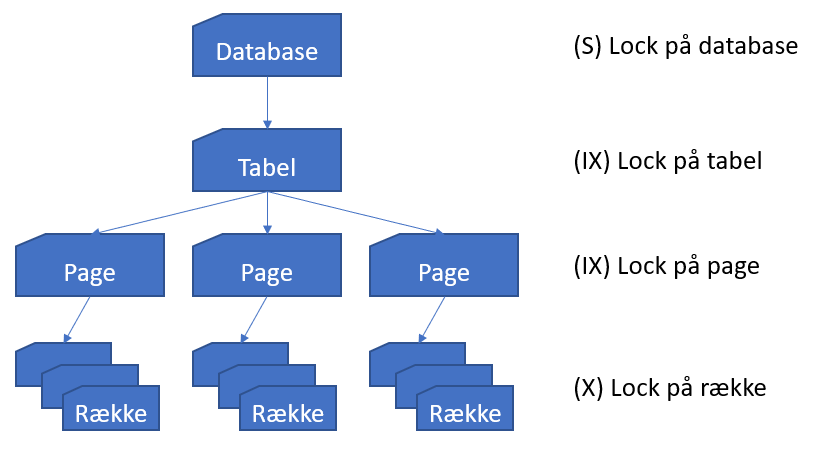

The picture above shows the locks held before and after a lock escalation occurred.

The memory footprint is of course decreased by lock escalations - but this also affects the concurrency of your database! An X lock at the table level means that no one else is able to read from or write to your table; an S lock at the table level means that your table is read-only and nobody else can write to it anymore! The throughput of your database will simply decrease!
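If you want to see the effect of an escalation yourself, the following sketch compares the locks held after a small and after a large update. It rests on several assumptions: `dbo.EscalationDemo` is a hypothetical table, the row counts are arbitrary, and the `ROWLOCK` hint is only there to ask for row-level locks at the start (it does not prevent an escalation).

```sql
-- Hypothetical demo table filled with 10,000 rows.
CREATE TABLE dbo.EscalationDemo
(
    Id      INT       NOT NULL PRIMARY KEY,
    Payload CHAR(100) NOT NULL
);

INSERT INTO dbo.EscalationDemo (Id, Payload)
SELECT TOP (10000) ROW_NUMBER() OVER (ORDER BY (SELECT NULL)), 'x'
FROM sys.all_columns AS a
CROSS JOIN sys.all_columns AS b;

-- Small update: well below the escalation threshold.
BEGIN TRANSACTION;

UPDATE dbo.EscalationDemo WITH (ROWLOCK)
SET Payload = 'y'
WHERE Id <= 100;

SELECT resource_type, request_mode, COUNT(*) AS lock_count
FROM sys.dm_tran_locks
WHERE request_session_id = @@SPID
GROUP BY resource_type, request_mode;

ROLLBACK TRANSACTION;

-- Large update: more than 5000 row locks would be needed.
BEGIN TRANSACTION;

UPDATE dbo.EscalationDemo WITH (ROWLOCK)
SET Payload = 'y'
WHERE Id <= 8000;

SELECT resource_type, request_mode, COUNT(*) AS lock_count
FROM sys.dm_tran_locks
WHERE request_session_id = @@SPID
GROUP BY resource_type, request_mode;

ROLLBACK TRANSACTION;

DROP TABLE dbo.EscalationDemo;
```

For the small update you would expect many `KEY` locks in mode X plus IX locks on the pages and the table. If the escalation kicks in for the large update, the individual `KEY` and `PAGE` locks should be gone and a single `OBJECT` lock in mode X should remain.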

SQL Server triggers a lock escalation when you have acquired more than 5.000 locks at one level. That’s a hardcoded limit that can’t be changed by any configuration option. Since SQL Server 2008 you are able to control lock escalations per table through the LOCK_ESCALATION option of the ALTER TABLE DDL statement.
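A minimal sketch of that ALTER TABLE syntax could look as follows. The table `dbo.EscalationOptionDemo` is a hypothetical name used only for illustration; in practice you would run just the one statement you need against your own table.

```sql
-- Hypothetical table, only used to demonstrate the syntax.
CREATE TABLE dbo.EscalationOptionDemo
(
    Id INT NOT NULL PRIMARY KEY
);

-- TABLE (the default): escalate to the table level.
ALTER TABLE dbo.EscalationOptionDemo SET (LOCK_ESCALATION = TABLE);

-- AUTO: allow escalation to the partition level on partitioned tables.
ALTER TABLE dbo.EscalationOptionDemo SET (LOCK_ESCALATION = AUTO);

-- DISABLE: turn lock escalation off for this table.
ALTER TABLE dbo.EscalationOptionDemo SET (LOCK_ESCALATION = DISABLE);

-- Check which setting is currently active (here the last one applied, DISABLE).
SELECT name, lock_escalation_desc
FROM sys.tables
WHERE object_id = OBJECT_ID(N'dbo.EscalationOptionDemo');

DROP TABLE dbo.EscalationOptionDemo;
```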

By default SQL Server always escalates to the table level (option TABLE). If you set the escalation option to AUTO, SQL Server can escalate to the partition level if your table is partitioned. But you have to be very careful with that option, because it can result in deadlocks if you are accessing the partitions in the wrong order. And with the option DISABLE you can disable lock escalations for the table - with all the various side-effects (regarding memory consumption) as mentioned previously.

The question now is: how can you change or delete more than 5.000 rows in a table effectively without triggering lock escalations?

- Gradually update/delete fewer than 5.000 rows at a time (e.g. in a WHILE loop)

- Use partition switching, if your table is partitioned

- Temporarily disable lock escalations, but be aware of the memory consumption in the meantime
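The first option from the list above can be sketched as a simple WHILE loop. This is an illustration under stated assumptions rather than a tuned solution: `dbo.BatchDemo` is a hypothetical table, the batch size of 4000 is an arbitrary value below the threshold, and the loop relies on every DELETE running as its own auto-commit transaction (no surrounding transaction, implicit transactions off).

```sql
-- Hypothetical demo table with 20,000 rows; replace it with your own table.
CREATE TABLE dbo.BatchDemo
(
    Id      INT       NOT NULL PRIMARY KEY,
    Payload CHAR(100) NOT NULL
);

INSERT INTO dbo.BatchDemo (Id, Payload)
SELECT TOP (20000) ROW_NUMBER() OVER (ORDER BY (SELECT NULL)), 'x'
FROM sys.all_columns AS a
CROSS JOIN sys.all_columns AS b;

DECLARE @BatchSize    INT = 4000;  -- assumed value, kept below 5000
DECLARE @RowsAffected INT = 1;

WHILE @RowsAffected > 0
BEGIN
    -- Each DELETE commits on its own, so its locks are released
    -- before the next batch starts.
    DELETE TOP (@BatchSize)
    FROM dbo.BatchDemo
    WHERE Id <= 15000;

    SET @RowsAffected = @@ROWCOUNT;
END;

-- 15,000 of the 20,000 rows were deleted in batches.
SELECT COUNT(*) AS RemainingRows  -- 5000
FROM dbo.BatchDemo;

DROP TABLE dbo.BatchDemo;
```

Keep in mind that the work is no longer atomic with this approach: if the loop fails halfway through, the batches that already committed stay deleted.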

Summary

Lock Escalations are a safety net provided by SQL Server. There is a good reason why that concept exists, but it introduces the side-effect of reduced concurrency once an escalation has happened. Therefore you have to be very careful when you write code that deals with more than 5.000 rows in one go. Maybe you are able to perform your work on these records gradually, step by step, instead of running one big UPDATE or DELETE statement.