KQL Data Live Copy to OneLake

Use your KQL data everywhere in Fabric

Microsoft has released the final piece of the current puzzle around OneLake as a one-stop-shopping service for data in Fabric. Until now, KQL data was only accessible from within the KQL database itself.

With this addition, we can now finally say that OneLake is the one place for your data in Fabric.

How to set it up

You can set this new feature up at two levels in your KQL database - either at the database level or at the table level.

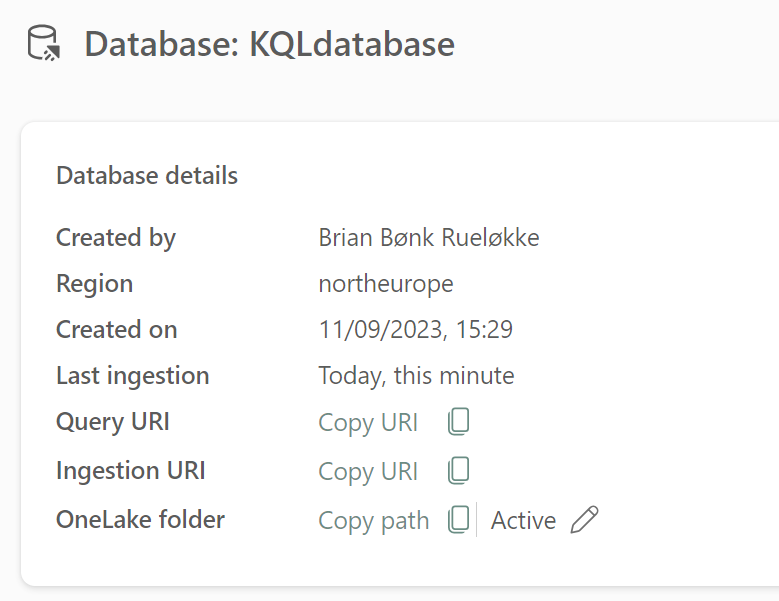

The database-level configuration is found in the “database details” part of the KQL database, where the bottom line shows the OneLake folder option. In the screenshot below, the current status is “Enabled”.

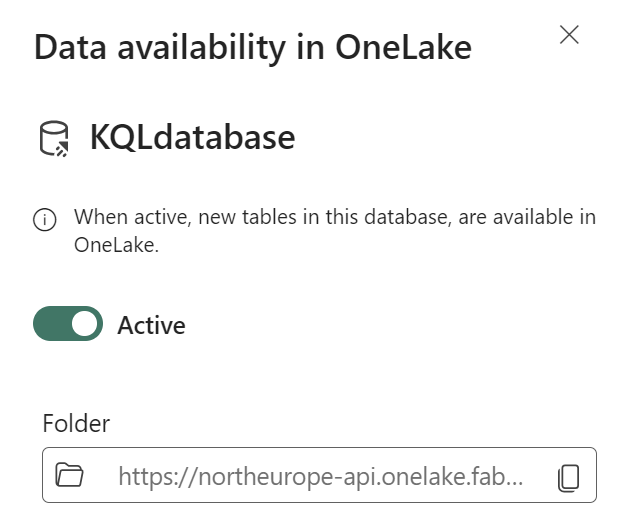

From here you simply flip the toggle, and you can also select a specific folder in your OneLake where the data should be made available.

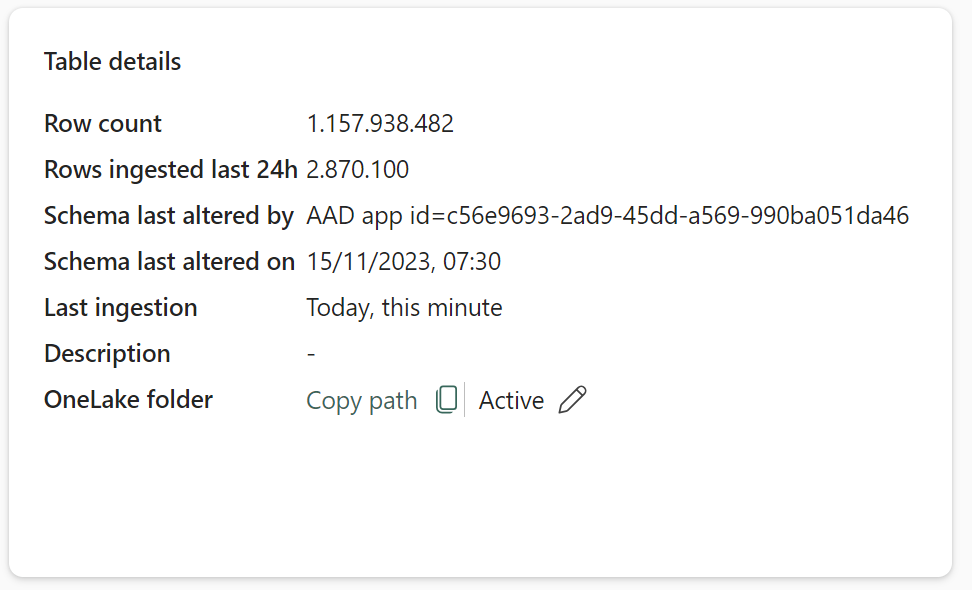

The table level is configured in the same place as the database level: just select the table, find the “table details” area, and look at the bottom line of elements.

Use the KQL data from OneLake

Once the setup is done, the data becomes available. It can take around 10 minutes for the data to show up in the OneLake structure, so just be patient.

You can then choose to use the data as is, directly from OneLake. The data is exposed in Delta Parquet format.
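If you want to read the exposed Delta table directly, you need its OneLake path. Here is a minimal sketch of how such a path could be put together. The helper name `onelake_table_path` is my own invention, and I am assuming the table sits under a `Tables` folder of the KQL database item, so please copy the real path from the item properties rather than trusting this blindly.

```python
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"  # OneLake DFS endpoint


def onelake_table_path(workspace: str, item: str, table: str) -> str:
    """Build an abfss:// path to a table folder in OneLake.

    `workspace` and `item` are expected to be the workspace and item
    identifiers (for example GUIDs). The `Tables/<table>` layout is an
    assumption about how the KQL data is exposed.
    """
    for name, value in (("workspace", workspace), ("item", item), ("table", table)):
        if not value or "/" in value:
            raise ValueError(f"{name} must be a non-empty name without '/'")
    return f"abfss://{workspace}@{ONELAKE_HOST}/{item}/Tables/{table}"


if __name__ == "__main__":
    # Placeholder identifiers, not real ones.
    print(onelake_table_path("my-workspace-id", "my-kql-db-id", "MyTable"))
```

In a Fabric notebook, a path like this could then be handed to something like `spark.read.format("delta").load(path)`.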

You can also choose to link the data to a Lakehouse using the Shortcut feature.

Hit the OK button and the shortcut is created.

Now you have to wait again. The first load of the data from the OneLake shortcut will give you an “Unidentified” folder with the raw Parquet files as content.

But just wait - it will resolve…

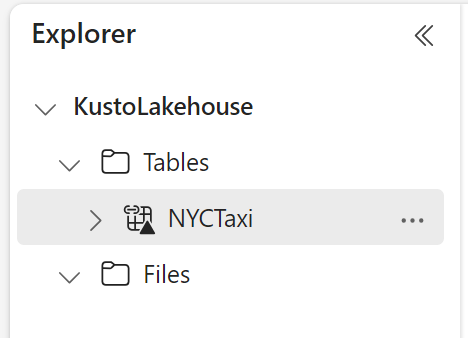

I do hope this will be improved later on, so we don’t have to wait that long before the data can be used in a shortcut. My patience ran out, so I created a new Lakehouse (named KustoLakehouse) and recreated the shortcut there. It resolved after 20 minutes of waiting.
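If you would rather script the waiting than stare at the screen, a small polling loop can do it for you. This is only a sketch: `wait_until` and the `is_ready` callback are hypothetical names of mine, and how you actually check that the shortcut has resolved (for example by listing the Lakehouse tables) is left for you to plug in.

```python
import time
from typing import Callable


def wait_until(
    is_ready: Callable[[], bool],
    timeout_s: float = 30 * 60,   # give up after 30 minutes
    interval_s: float = 60,       # check once a minute
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll is_ready() until it returns True or timeout_s has elapsed.

    Returns True if the check succeeded, False if the timeout was hit.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if is_ready():
            return True
        if time.monotonic() >= deadline:
            return False
        sleep(interval_s)
```

The `sleep` parameter is only there so the loop can be tried out without really waiting.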

Here is my (new) Lakehouse after some waiting time:

Now you can use the stored data from your KQL database tables in every other service in Fabric using your known tools like Lakehouse, Warehouse and Power BI.

But - you cannot (yet) see the data in your OneLake file explorer - there the content of the folder will remain empty. I could not make it work in the current version of the OneLake file explorer app, even after several restarts of the application. Perhaps this will change in the future.

Pricing

We know that the price for storage in OneLake is USD 23 per TB per month (at the time of writing). So are we now billed for the same storage twice when using this feature?

Big no, fortunately.

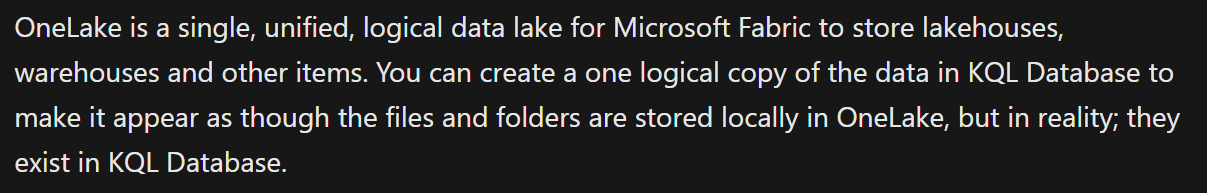

According to the Microsoft documentation, the data from the KQL database is not actually copied over to OneLake - it is only made available to the OneLake structure and service. The data is still stored in the KQL database, but is exposed to OneLake in Delta Parquet format.
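To put a number on it, here is a tiny back-of-the-envelope calculation. It uses the storage price quoted in this post, which may well have changed by the time you read this, and the function name is just my own. It only covers storage, not the compute for queries.

```python
PRICE_USD_PER_TB_MONTH = 23.0  # figure quoted in this post; check current pricing


def monthly_storage_cost(size_tb: float, copies: int = 1) -> float:
    """Monthly storage cost in USD for size_tb terabytes stored `copies` times."""
    if size_tb < 0 or copies < 1:
        raise ValueError("size_tb must be >= 0 and copies must be >= 1")
    return size_tb * copies * PRICE_USD_PER_TB_MONTH


if __name__ == "__main__":
    billed_once = monthly_storage_cost(5)             # what we actually pay
    billed_twice = monthly_storage_cost(5, copies=2)  # what I feared
    print(f"5 TB billed once:  {billed_once:.2f} USD/month")
    print(f"5 TB billed twice: {billed_twice:.2f} USD/month")
```

So for 5 TB of KQL data the difference would be 115 USD a month, which is nice not to pay.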

Here a screenshot from the documentation:

But - then we need to think about the compute cost of each query you run against your KQL data from OneLake. As I read it, this will now be billed as KQL data seconds (my own term, sorry).

Use cases

I hope the use cases are obvious - use the KQL data directly in other services without knowing the KQL scripting language (hint - you can actually use SQL against the KQL database…).

I hope you enjoyed this quick run-through of the new KQL data in OneLake feature.

Happy coding…