Ever been as frustrated as I have when importing flat files to a SQL Server and the format suddenly changes in production?

Commonly used integration tools (like SSIS) are very dependent on the correct, consistent and same metadata when working with flat files.

So I’ve come up with an alternative solution that I would like to share with you.

When implemented, the process of importing flat files with changing metadata is handled in a structured, and most important, resiliant way. Even if the columns change order or existing columns are missing.

Background

When importing flat files to SQL server almost every standard integration tool (including TSQL bulkload) requires fixed metadata from the files in order to work with them.

This is quite understandable, as the process of data transportation from the source to the destination needs to know where to map every column from the source to the defined destination.

Let me make an example:

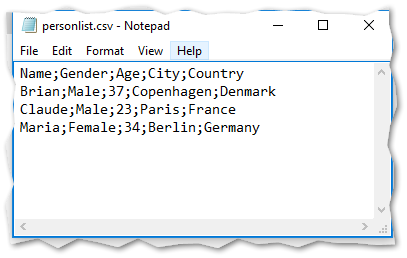

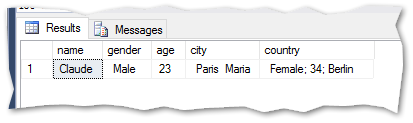

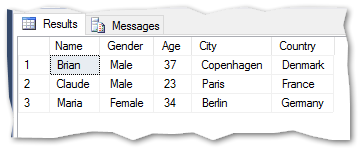

A source flat file table like below needs to be imported to a

SQL server database.

This file could be imported to a SQL Server database (in this example named FlatFileImport) with below script:

|

|

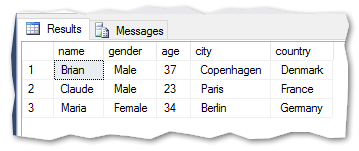

The result:

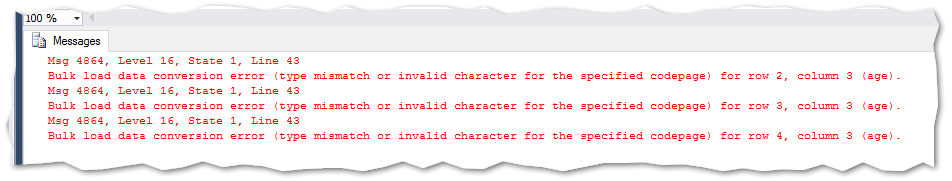

If the column ‘Country’ would be removed from the file after the import has been setup, the process of importing the file would either break or be wrong (depending on the tool used to import the file) The metadata of the file has changed.

|

|

With this example, the import seems to go well, but upon browsing the data, you’ll see that only one row is imported and the data is wrong.

The same would happen if the columns ‘Gender’ and ‘Age’ where to switch places. Maybe the import would not break, but the mapping of the columns to the destination would be wrong, as the ‘Age’ column would go to the ‘Gender’ column in the destination and vice versa. This due to the order and datatype of the columns. If the columns had the same datatype and data could fit in the columns, the import would go fine – but the data would still be wrong.

|

|

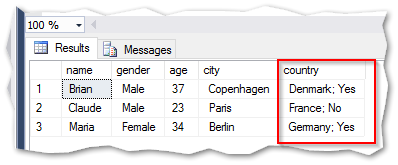

When importing the same file, but this time with an extra column (Married) – the result would also be wrong:

|

|

The result:

The above examples are made with pure TSQL code. If it was to be made with an integration tool like SQL Server Integration Services, the errors would be different and the SSIS package would throw more errors and not be able to execute the data transfer.

The cure

When using the above BULK INSERT functionality from TSQL the import process often goes well, but the data is wrong with the source file is changed.

There is another way to import flat files. This is using the OPENROWSET functionality from TSQL.

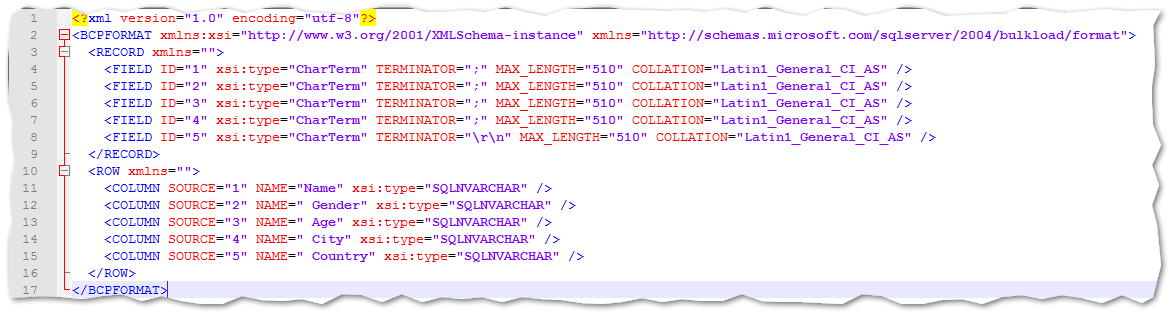

In section E of the example scripts from MSDN, it is described how to use a format file. A format file is a simple XML file that contains information of the source files structure – including columns, datatypes, row terminator and collation.

Generation of the initial format file for a curtain source is rather easy when setting up the import.

But what if the generation of the format file could be done automatically and the import process would be more streamlined and manageable – even if the structure of the source file changes?

From my GitHub project you can download a home brewed .NET console application that solves just that.

If you are unsure of the .EXE files content and origin, you can download the code and build your own version of the GenerateFormatFile.exe application.

Another note is that I’m not hard core .Net developer, so someone might have another way of doing this. You are very welcome to contribute to the GitHub project in that case.

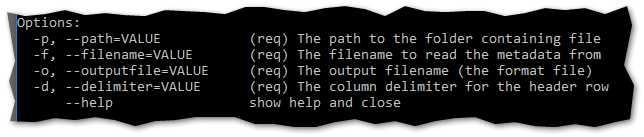

The application demands inputs as below:

Example usage:

generateformatfile.exe -p c:\source\ -f personlist.csv -o personlistformatfile.xml -d ;

The above script generates a format file in the directory c:\source\ and names it personlistFormatFile.xml.

The content of the format file is as follows:

The console application can also be called from TSQL like this:

|

|

If by any chance the xp_cmdshell feature is not enabled on your local machine – then please refer to this post from Microsoft: Enable xp_cmdshell

Using the format file

After generation of the format file, it can be used in TSQL script with OPENROWSET.

Example script for importing the ‘personlist.csv’

|

|

This loads the data from the source file to a new table called ‘personlist_bulk’.

From here the load from ‘personlist_bulk’ to ‘personlist’ is straight forward:

|

|

Load data even if source changes

The above approach works if the source is the same every time it loads. But with a dynamic approach to the load from the bulk table to the destination table it can be assured that it works even if the source table is changed in both width (number of columns) and column order.

For some the script might seem cryptic – but it is only a matter of generating a list of column names from the source table that corresponds with the column names in the destination table.

|

|

The result is a TSQL statement what looks like this:

|

|

The exact same thing would be able to be used with the other source files in this demo. The result is that the destination table is correct and loaded with the right data every time – and only with the data that corresponds with the source. No errors will be thrown.

From here there are some remarks to be taken into account:

As no errors are thrown, the source files could be empty and the data updated could be blank in the destination table. This is to be handled by processed outside this demo. Further work As this demo and post shows it is possible to handle dynamic changing flat source files. Changing columns, column order and other changes, can be handled in an easy way with a few lines of code.

Going from here, a suggestion could be to set up processes that compared the two tables (bulk and destination) and throws an error if X amount of the columns are not present in the bulk table or X amount of columns are new.

It is also possible to auto generate missing columns in the destination table based on columns from the bulk table.

The only boundaries are set by limits to your imagination

Summary With this blogpost I hope to have given you inspiration to build your own import structure of flat files in those cases where the structure might change.

As seen above the approach needs some .NET programming skills – but when it is done and the console application has been built, it is simply a matter of reusing the same application around the different integration solutions in your environment.